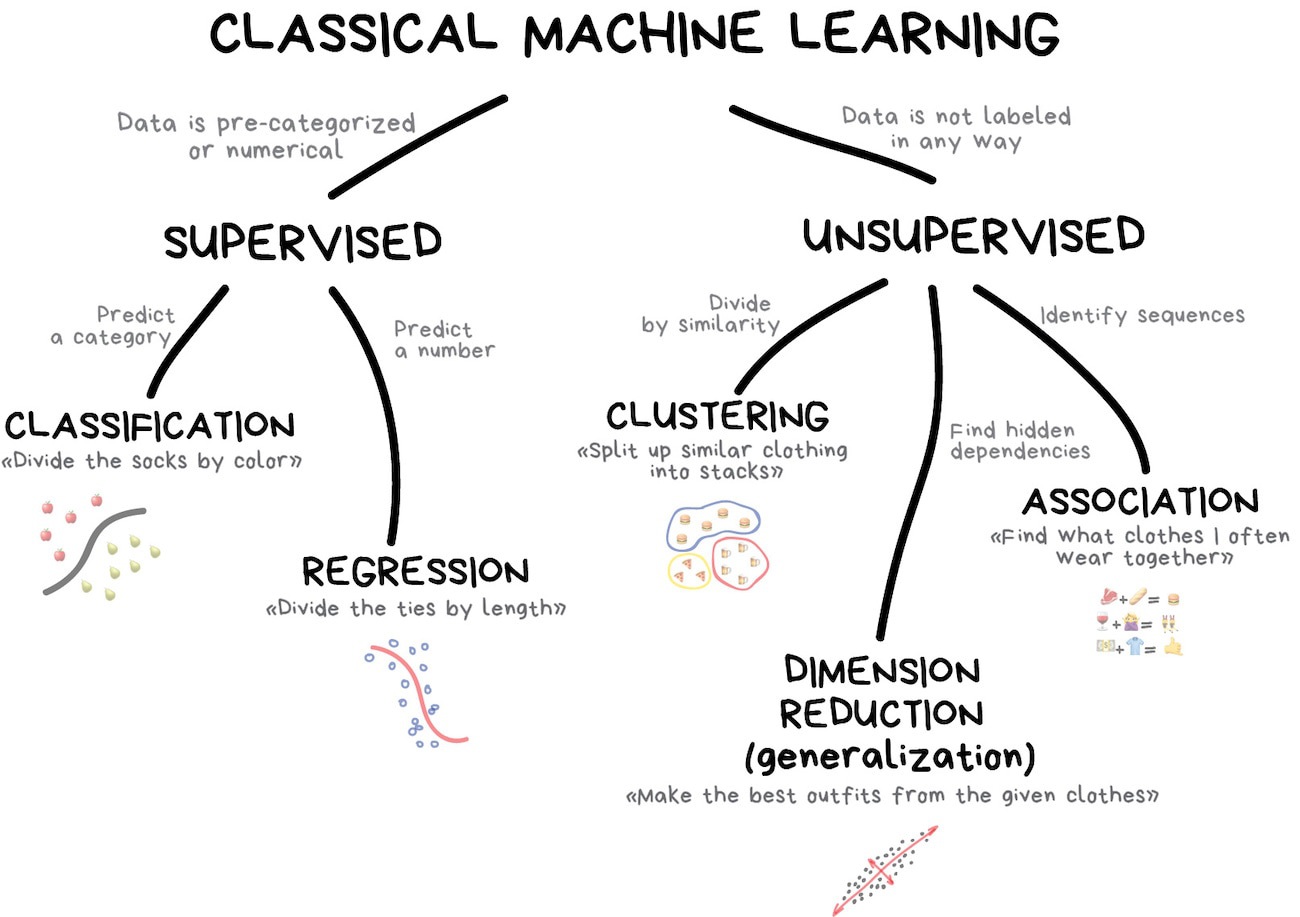

In gestalt I am interested in finding the model that presents the best combination of predictive accuracy (normed against chance) and parsimony, as indexed by the D statistic. I don’t use my personal finite time doing anything other than ODA.

This entry was posted in Miscellaneous Statistics by Andrew.

conceptual analogue of quantum mechanics.” But, hey, something can be hyped and still be useful, so who knows? I’ll leave it for others to make their judgments on this one. It seems like a lot of hype to me: “discovered.

So far, based on given information, it sounds pretty appealing – do you see any pitfalls? – would you recommend it for using in data analysis when I want to achieve accurate predictions? I found a paper in which a comparison of several machine learning algorithms reveals that a classification tree analysis based on ODA approach delivers best classification results (compared to binary regression, random forest, SVM, etc.).

Maximizing Predictive Accuracy was written as a means of organizing and making sense of all that has so far been learned about ODA, through November of 2015. Understanding of ODA methodology skyrocketed over the next decade, and 2014 produced the development of novometric theory – the conceptual analogue of quantum mechanics for the statistical analysis of classical data.

When the first book on ODA was written in 2004 a cornucopia of indisputable evidence had already amassed demonstrating that statistical models identified by ODA were more flexible, transparent, intuitive, accurate, parsimonious, and generalizable than competing models instead identified using an unintegrated menagerie of legacy statistical methods.

On the website you find a lot of material for Optimal (or “optimizing”) Data Analysis (ODA), which is described as:

In the Optimal (or “optimizing”) Data Analysis (ODA) statistical paradigm, an optimization algorithm is first utilized to identify the model that explicitly maximizes predictive accuracy for the sample, and then the resulting optimal performance is evaluated in the context of an application-specific exact statistical architecture. Discovered in 1990, the first and most basic ODA model was a distribution-free machine learning algorithm used to make maximum accuracy classifications of observations into one of two categories (pass or fail) on the basis of their score on an ordered attribute (test score).
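
To make the basic two-category case concrete, here is a minimal Python sketch of what "maximum accuracy classification on an ordered attribute" could look like: try every cutpoint between adjacent distinct scores, in both directions, and keep the one that classifies the most observations correctly. This is only my reading of the description, not the ODA software itself. The function name `fit_cutpoint` and the toy data are made up for illustration, the sketch maximizes raw in-sample accuracy (the actual method may weight observations or norm accuracy against chance), and it leaves out the second step entirely, the exact statistical evaluation of the resulting performance.

```python
from typing import Sequence, Tuple


def fit_cutpoint(
    scores: Sequence[float], labels: Sequence[int]
) -> Tuple[float, int, float]:
    """Search for the cutpoint and direction that maximize in-sample accuracy.

    Hypothetical helper, not part of any ODA package. Labels must be 0 or 1.
    Returns (cutpoint, high_class, accuracy), where high_class is the class
    predicted for scores above the cutpoint.
    """
    if len(scores) != len(labels) or len(scores) == 0:
        raise ValueError("scores and labels must be non-empty and equal length")

    distinct = sorted(set(scores))
    if len(distinct) < 2:
        raise ValueError("need at least two distinct scores")

    # Candidate cutpoints: midpoints between adjacent distinct scores.
    candidates = [(a + b) / 2 for a, b in zip(distinct, distinct[1:])]

    best = None
    for cut in candidates:
        for high_class in (1, 0):  # try both directions of the rule
            correct = sum(
                (high_class if s > cut else 1 - high_class) == y
                for s, y in zip(scores, labels)
            )
            accuracy = correct / len(scores)
            # Strict ">" keeps the first rule found when accuracies tie.
            if best is None or accuracy > best[2]:
                best = (cut, high_class, accuracy)
    return best


# Toy data (invented): test scores and pass (1) / fail (0) outcomes.
test_scores = [35, 42, 48, 55, 61, 67, 72, 80]
passed = [0, 0, 0, 0, 1, 1, 0, 1]
cutpoint, high_class, accuracy = fit_cutpoint(test_scores, passed)
print(f"predict class {high_class} when score > {cutpoint} (accuracy {accuracy:.3f})")
```

Note that a rule found this way is tuned to the sample at hand, so the accuracy it reports is an in-sample figure; whether it holds up on new data is a separate question that this sketch does not address.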